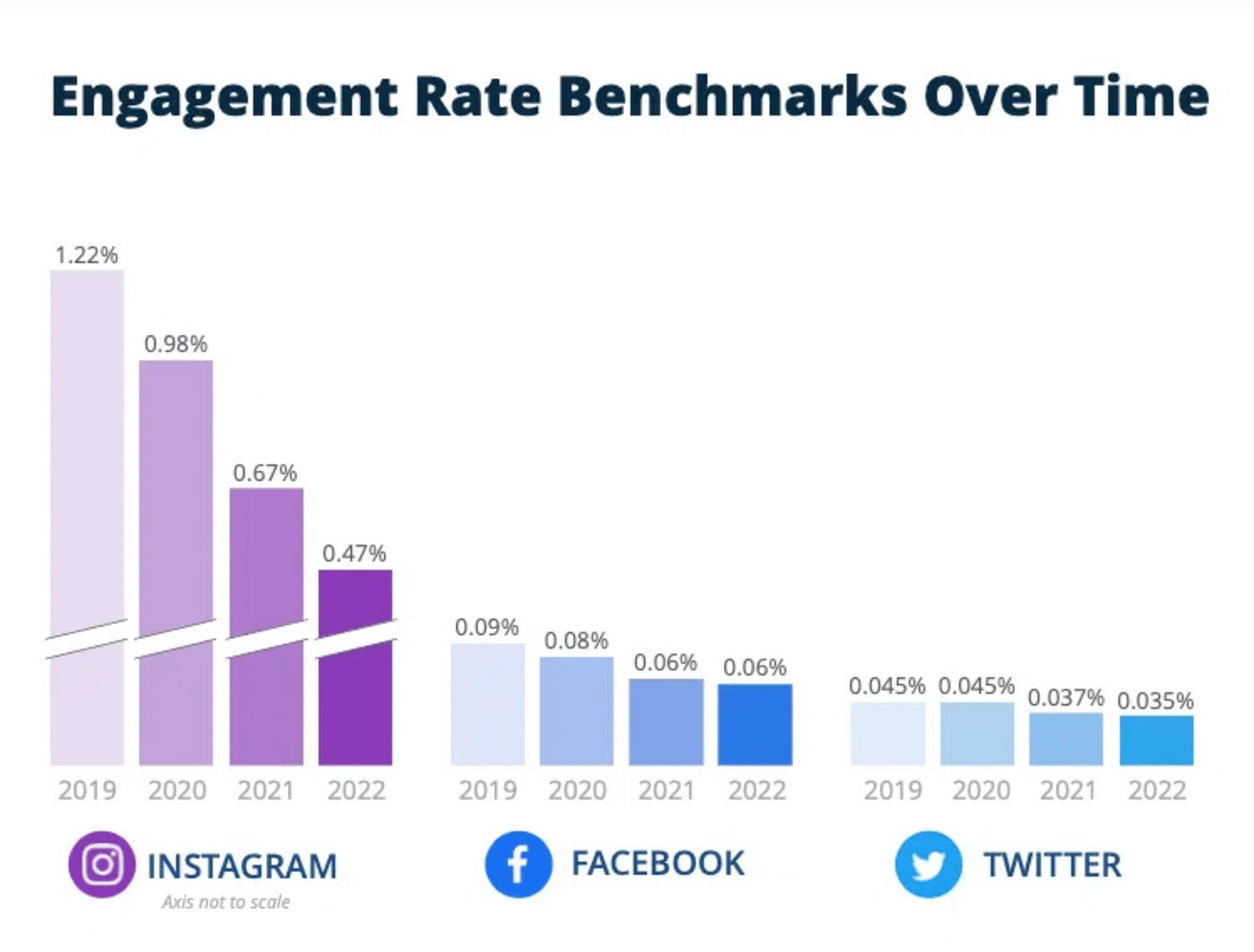

Andrew Chen discovered one of the most powerful laws in marketing: the Law of Shitty Clickthroughs. It has a damning verdict: all channels decay over time.

The underlying drivers are straightforward: novelty fades, competitors crowd in quickly, and the winner’s curse kicks in, where scale inevitably pushes you beyond the core customer into lower-intent edges. In a world increasingly saturated with AI, each of these forces speeds up. The result is not just more automation, but a broader acceleration in channel decay as a macro trend.

Adriana Tica, All Marketing Channels Decay with Time (What to Do About it)

When the tide is running against you, the right move is often not to do more of what everyone else is doing, but to step away from the crowd altogether. That is especially true in customer engagement.

Cost Transfer and the Automation Trap

One neglected but brutal reality is that AI-driven automation does not eliminate cost so much as relocate it. What businesses save in effort, customers pay for in attention, patience, and unnecessary work. This shows up everywhere, from customer service to marketing.

Amber Electric is a useful example of the automation trap. The company rode a strong wave of battery demand, but as it scaled, support quality became a visible source of customer pain, even though its support operation was backed by AI darling Lorikeet. Amber later apologised for long wait times and billing issues. The automation may have solved part of Amber’s internal scaling problem, but it did not remove the cost from the system. It simply relocated part of that cost onto customers, who had to absorb the delays, the uncertainty, and the effort required to get issues resolved. I know this firsthand: as a former customer, I sent over 20 emails and got little back beyond AI slop.

Customer service may eventually be dominated by AI. But the objective remains the same: service quality, not cost reduction. The danger is that cost becomes the easier short-term metric for product teams to optimise, even as the customer experience deteriorates.

The case with marketing is much more complicated. Customer service can still be judged against a relatively clear end state: was the problem solved, and solved well? Marketing has no such anchor. Its outcomes are mediated by channel dynamics, competitive imitation, and customer adaptation. AI may improve the efficiency of any individual campaign while simultaneously degrading the economics of the channel itself. What looks like productivity at the firm level can show up as decay at the system level.

To win in this environment, it helps to borrow one of Jeff Bezos’s enduring strategic principles: return to what does not change, even in the age of AI.

I very frequently get the question: ‘What’s going to change in the next 10 years?’ And that is a very interesting question; it’s a very common one. I almost never get the question: ‘What’s not going to change in the next 10 years?’ And I submit to you that that second question is actually the more important of the two.

In marketing and customer engagement, what does not change is the expectation of genuine understanding and relevance, the need for visible effort and sincerity, and some form of upfront commitment. These are not relics of a pre-AI era. They are precisely the qualities that become more valuable as everything else becomes cheaper to simulate. And in an AI-saturated world, they increasingly form the burden of proof for the business: proof of understanding, proof of work, and proof of stake.

Proof of Understanding

Using AI to do more of what is already happening can be deeply myopic, especially when the current state is itself deficient. Marketing is one such case.

Today, Marketing has huge deficiencies. It broadcasts to people over large public media formats, starting with radio and television, and now the Internet. For the most part the message that it broadcasts is static, and targets segments of potentially millions of people, rather than individuals.

The first-order use case for AI in marketing is obvious: more content, more campaigns, more variants, more targeting, and more touch-points. But that is also exactly why it is unlikely to be where durable advantage comes from. If everyone can generate more messages, then more messages stop being an advantage. They just become the new baseline. This is where proof of understanding starts to matter more.

In growth, understanding is not the same thing as personalisation. Personalisation is mostly cosmetic. It inserts names, companies, recent activities, or demographic markers into an otherwise unchanged message and can make a message look specific without making it useful. Understanding is structural and reflects a model of what the customer is actually dealing with: the constraint they are under, the trade-off they are struggling with, the timing of the decision, and the reason the problem matters now. In other words, understanding is empathy made concrete.

AI is extremely good at simulating personalisation and much less reliable at producing authentic understanding. Simply using AI to scale current marketing practices only reproduces shallowness at lower cost and higher volume. Real understanding, by contrast, moves away from static segmentation and toward dynamic inference around intent, friction, and timing. It reduces the distance between where customers are and the action you want them to take.

That is the real wedge AI creates: it makes fake understanding cheaper, and real understanding more valuable.

Proof of Work

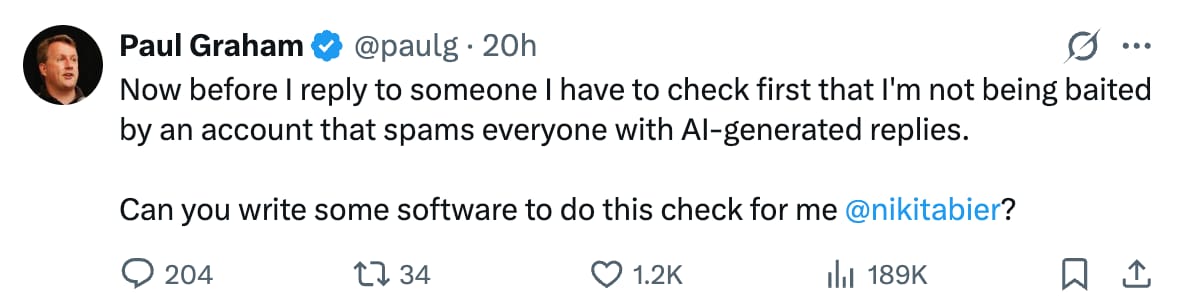

Proof of work is most familiar from crypto, where computational cost protects the network from cheap spam and bad actors. The work is not valuable in itself. Its value lies in making participation costly enough to be credible.

This principle is somewhat transferable to customer engagement, where businesses need to show that they have actually done something to deserve customers’ attention in a world where attention becomes increasingly expensive to earn.

This has shown up in funny ways. Content with typos can sometimes outperform polished copy, not because mistakes are good, but because imperfections can act as accidental proof of work. They suggest a human was actually there, making an effort to be seen.

I do believe humans still retain some ability to sense other humans. We can often tell, however imperfectly, whether a message carries real attention or was generated at negligible cost. That does not mean roughness should be manufactured as a tactic; customers will spot that quickly and build immunity to it. It means proof of work still matters, even in strange and indirect ways.

In customer engagement, proof of work shows up in two ways: real effort to offer care that is actually specific, and restraint in not taking every opportunity to reach out. The first demands a minimum depth for an interaction to not feel cheap or transactional. The second demands the discipline to not monetise every single touch-point with customers.

Both matter because AI pushes in the opposite direction and optimises for a local optimum. It makes it easier to produce more messages, trigger more campaigns, and justify more outreach. But volume is not proof of work. If anything, it often signals the absence of it. Proof of work is what remains after the business has filtered for what is worth saying, to whom, and why now. It is visible not just in the quality of what gets sent, but in the judgment behind what does not.

Proof of Stake

Too often, revenue culture reduces a human being to a number. Programs, outreach, and communication become vehicles for extraction, with the sole purpose of converting the customer into revenue. In the worst cases, this extends to misleading people who were never a good fit in the first place. The mindset is fundamentally one-sided. It treats the interaction as something to take from, rather than something to stand behind. In the long run, that necessarily creates mistrust.

Proof of stake, another familiar mechanism from crypto, points in the opposite direction. It asks a harder question: what is the business willing to put on the line in order to earn the customer’s trust and business? A business becomes more credible when its incentives are structurally aligned with customer success, and when it bears some downside when the customer loses.

In customer engagement, this can take many forms: genuine education before conversion, honest guidance beyond the immediate service offering, service commitments that survive the sale, or commercial structures where the business shares some of the risk. The common feature is simple. The business is no longer just asking to be believed but making belief less necessary by giving the customer something real to rely on.

Interestingly, proof of stake usually contains proof of work within it. Real stake requires a decision to commit, and decisions with consequences still need to be owned by someone. AI can support that process, but it cannot meaningfully hold the risk itself. That is why proof of stake is harder to fake. Once something real is on the line, some real judgment usually has to sit behind it too.

Many AI automations in customer engagement today are just cost transfer in disguise. What businesses save in effort, customers increasingly pay for in filtering noise, overcoming friction, and doing unnecessary work. Because these are the most obvious first-order applications of AI, the macro environment is predictably heading in this direction as businesses chase the same easy tactics. The result is a broader enshittification of customer engagement. In that world, being more efficient at generating AI slop will not make a business stand out. What matters instead is meeting the new burden of proof for credibility and for being worth engaging with: proof of understanding, proof of work, and proof of stake.